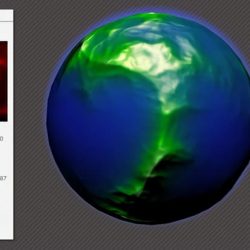

Spherical Perlin noise

I'm not quite done experimenting with 3D Perlin noise. Another cool feature that I haven't seen in Flash is wrapping the noise seamlessly around a sphere. To make the texture wrap seamlessly and without distortion, we can use the 3D nature of the noise in an interesting way. If we evaluate the 3D position of each point on the surface of a sphere, we can get the noise at that specific